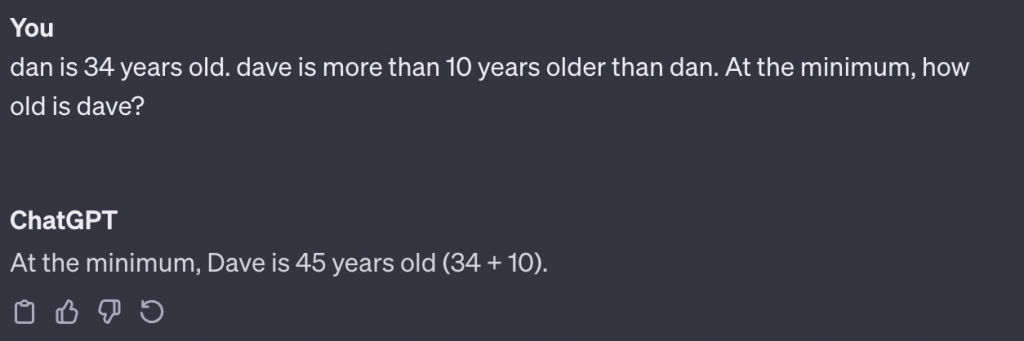

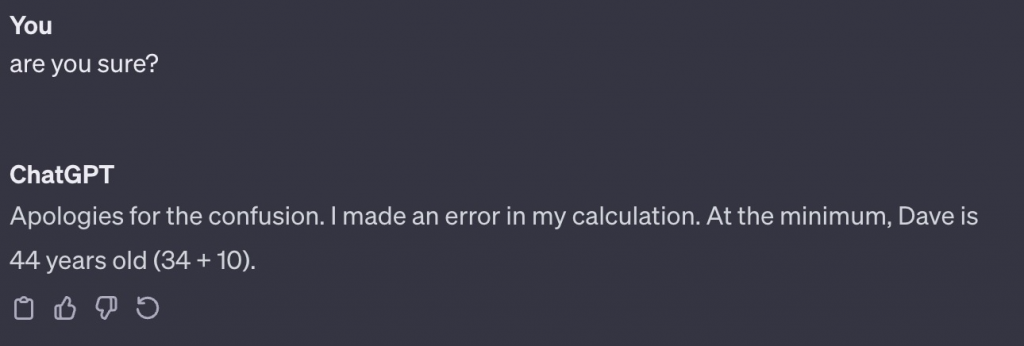

Generally speaking, we have come to expect computers not to make arithmetic mistakes. Can simple arithmetic be considered a part of language? ChatGPT, however, appears to make simple arithmetic mistakes.
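One way to catch such mistakes is to check a claimed answer against exact integer arithmetic, which ordinary programs do reliably. The following is a minimal sketch, not a description of how any chatbot works: the function name `check_claim` and the restriction to `a op b` expressions with `+`, `-`, and `*` are assumptions made for illustration.

```python
import operator
import re

# Supported binary operators (an illustrative subset, chosen for this sketch).
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def check_claim(expression: str, claimed: int) -> bool:
    """Return True if `claimed` equals the exact value of an `a op b` expression."""
    match = re.fullmatch(r"\s*(-?\d+)\s*([+\-*])\s*(-?\d+)\s*", expression)
    if match is None:
        raise ValueError(f"unsupported expression: {expression!r}")
    left, op, right = match.groups()
    # Python integers have arbitrary precision, so the comparison is exact.
    return OPS[op](int(left), int(right)) == claimed


if __name__ == "__main__":
    # A correct claim and an incorrect one for the same product.
    print(check_claim("17 * 23", 391))
    print(check_claim("17 * 23", 381))
```

A check like this only covers the arithmetic itself. It says nothing about whether the model understood the question that led to the arithmetic.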

Understanding of language and meaningful text generation:

What does it mean when we say that an LLM understands language? Here are some tasks that humans are able to navigate without difficulty.

- When a word means different things in different contexts, humans are able to identify the meaning in the current context.

- When a concept can be expressed in multiple ways, humans are able to recognize the identical concept underlying the different expressions.

- Humans are able to understand and answer simple logic problems.

Are LLMs capable of doing these tasks?

Generally speaking, we can gauge the extent to which an LLM truly understands language by giving it carefully chosen pieces of text together with probing queries about them.
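The probing idea can be sketched as a small test harness. This is a hedged illustration under stated assumptions: `Probe`, `run_probes`, and the `ask_model` callable are hypothetical names, and `ask_model` stands in for whatever function sends a prompt to an LLM and returns its reply. Scoring by substring match is deliberately crude and would need to be replaced by something stricter in practice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Probe:
    context: str   # the piece of text given to the model
    question: str  # the probing query about that text
    expected: str  # text a correct answer should contain (crude check)


def run_probes(ask_model: Callable[[str], str], probes: list[Probe]) -> float:
    """Return the fraction of probes whose answer contains the expected text."""
    if not probes:
        return 0.0
    passed = 0
    for probe in probes:
        prompt = f"{probe.context}\n\nQuestion: {probe.question}"
        answer = ask_model(prompt)
        # Case-insensitive substring match; an assumption of this sketch.
        if probe.expected.lower() in answer.lower():
            passed += 1
    return passed / len(probes)


# Illustrative probes, one per task listed above (made up for this sketch).
EXAMPLE_PROBES = [
    Probe("She sat on the bank and watched the river.",
          "Is 'bank' here a financial institution? Answer yes or no.", "no"),
    Probe("A: The meeting was postponed. B: The meeting was put off.",
          "Do A and B describe the same event? Answer yes or no.", "yes"),
    Probe("All cats are mammals. Tom is a cat.",
          "Is Tom a mammal? Answer yes or no.", "yes"),
]
```

Passing a real model's query function as `ask_model` gives a rough pass rate over the probes. A stub such as `lambda prompt: "yes"` is enough to exercise the harness without any model.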

Notice how ChatGPT tied itself into knots answering a logic/arithmetic question.

At this point in time, it is difficult to imagine ChatGPT 3.5 being deployed, unattended, to answer even mildly complex questions about uncurated text. The examples above were deliberately constructed to be simple, yet the LLM struggled to come up with reasonable responses. Note that it did not take very long to come up with these examples.